I realized something recently: the blog does not have nearly enough stir-fries, considering how much I enjoy them.

I view stir-fries as the easiest, tastiest way to use up whatever produce you have on hand. Just serve over quinoa or brown rice and you have a seriously hearty, vegetable-packed, nutritious meal.

When I want something super hearty, I look no further than portobello mushrooms.

Can I tell you a secret? My absolute favorite portobello mushroom recipe at the moment is our Balsamic Portobello Burgers. Think juicy portobello burgers with a creamy vegan garlic aioli sauce. It’s so good my eyes rolled back in my head when I took my first bite.

Since then, my affection for portobellos has grown into a full-blown obsession. I had to put them in a stir-fry. There was no question.

Origin of Stir-Frying

Stir-frying is thought to have originated in China. Traditionally, stir-fries are cooked in a wok with a small amount of hot oil and stirred continuously. But since we don’t have a wok (and chances are you don’t either), we used a cast iron skillet for this recipe.

You can take a deeper dive into the history of stir-frying here.

How to Make This Mushroom Stir-Fry

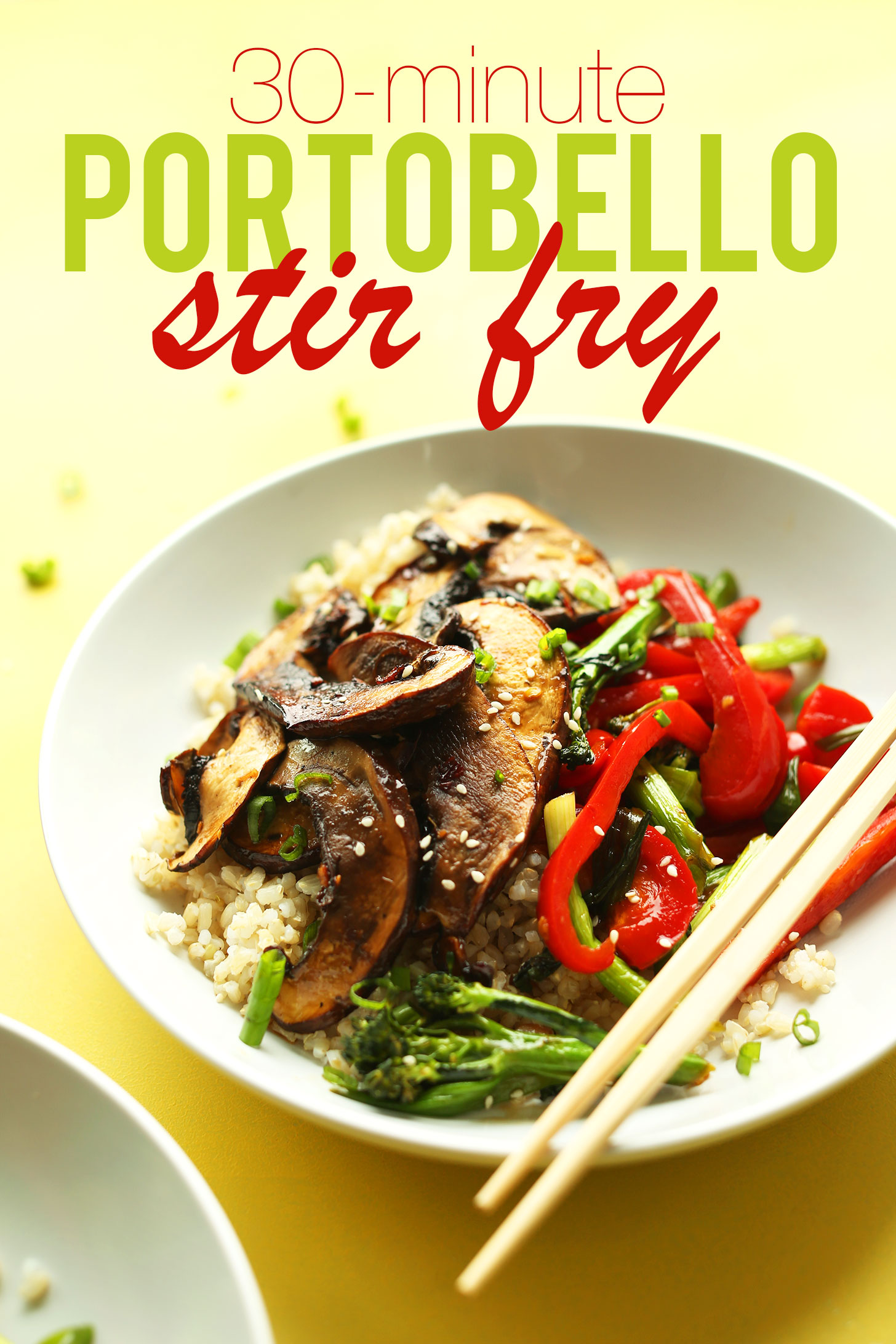

This stir-fry requires 10 basic ingredients and comes together in about 30 minutes!

The marinade is the perfect balance of savory, citrusy, and sweet. And the mushrooms soak it all up and get incredibly juicy and flavorful.

Once your portobellos have had a few minutes to marinate, it’s onto the stovetop, where they get a sear on both sides and begin to slightly caramelize. Oh mama.

For vegetables, I went with red bell pepper and broccolini. But I think you could use pretty much anything you have on hand! This is a very flexible, adaptable recipe that delivers big on flavor.

I hope you love this stir-fry! It’s:

- Hearty

- Flavorful

- Vegetable-packed

- Nourishing

- Simple

- & Delicious

This would make the perfect plant-based meal when you’re craving something hearty but healthy. I think it would be especially great for hosting, because just about everyone loves stir-fry, and it’s so satisfying that no one will realize they’re eating an entirely vegan/gluten-free meal.

If you give this recipe a try, let us know! Leave a comment, rate it, and don’t forget to tag a picture @minimalistbaker on Instagram! We love seeing what you come up with. Cheers, friends!

30-minute Portobello Mushroom Stir-Fry

Ingredients

MARINADE/SAUCE

- 2 cloves garlic, minced (2 cloves yield ~1 Tbsp or 6 g)

- 2 tsp minced ginger

- 3-4 Tbsp maple syrup (or agave nectar or coconut sugar)

- 1/2 tsp red pepper flakes (more or less to taste)

- 3-4 Tbsp tamari (or soy sauce if not gluten-free // more or less to taste)

- 1 Tbsp sesame oil (toasted or untoasted)

- 3 Tbsp lime juice

- 1 Tbsp water

VEGETABLES

- 2 portobello mushrooms (~20 g each)

- 1 medium red bell pepper (thinly sliced)

- 1 cup chopped broccolini

- 1 cup chopped green onion (optional)

FOR SERVING (optional)

- 4 cups cooked brown rice or cauliflower rice

- 1 tsp sesame seeds

Instructions

- Cook brown rice (or cauliflower rice) if serving with stir-fry.

- Next, wipe portobello mushrooms clean with a slightly damp towel (do not immerse in water or they will get soggy) and slice into thin strips (see photo).

- Prepare marinade by adding all ingredients to a small mixing bowl and whisking to combine. Taste and adjust flavor as needed, adding more ginger for brightness, lime juice for acidity, tamari for saltiness, red pepper flakes for heat, or maple syrup for sweetness.

- Add portobello mushrooms to a large shallow dish, such as a 9×13-inch baking pan, and top with marinade. Gently stir/toss to combine. Set aside to marinate for 10-12 minutes while you prep your vegetables. Toss occasionally to evenly coat.

- Chop vegetables and set aside. Once portobellos have marinated, heat a large skillet over medium heat and add a bit of sesame oil. Then add only as many portobellos as will fit comfortably in the pan (see photo), and sauté for 2-4 minutes on each side or until golden brown and slightly seared. You may have to do this in two batches depending on the size of your pan.

- Set portobellos aside and loosely cover to keep warm. Then add red pepper and broccolini to the pan and increase heat to medium-high. Sauté for 2-3 minutes, stirring frequently.

- Add the green onion (optional) and any remaining portobello marinade and toss to coat. Cook for 1 minute, then remove from heat and serve immediately with the seared portobellos. Cooking the vegetables briefly over relatively high heat gives them a nice sear and keeps them from getting soggy.

- Enjoy as is or with chili garlic sauce, sesame seeds, or a garnish of chopped green onion. Best when fresh, though leftovers keep in the refrigerator for up to 2-3 days. Reheat in the microwave or on the stovetop.

Nutrition (1 of 3 servings)

Makay says

This was so yummy! I already can’t wait to make it again.

Yay! We’re so happy to hear this, Makay. Thank you for sharing! xo

Deb says

Can I use regular broccoli instead of broccolini?

They don’t have it at my store.

That should work! You may need to cook it slightly longer though, so we would suggest cutting it pretty small and/or adding it a minute or two before the bell peppers. Hope that helps!

Anne says

It really looks like it’s missing a protein source. For that reason, I’d be hungry after eating this. I suppose you could go ahead and add tofu or some garbanzo beans, but this alone looks like a side dish and not a meal.

Hey Anne! You could definitely add a protein source to this!

Chris says

It looks perfect to me. I’m not vegan or vegetarian, but Americans’ obsession right now with protein at every meal is ridiculous. We get plenty.

Donna says

I made this the other day and my family loved it! Everyone wants me to start using this sauce for our vegetarian noodle stir fries. Can you tell me how long the sauce will keep in the fridge?

Thanks, Donna

Amazing! We’re so glad you and your family enjoy it, Donna! The sauce should keep for about 1 week, possibly longer. We think the lime juice is the ingredient that will go bad first, so you could probably extend that time by swapping the lime juice for a smaller amount of rice vinegar. Hope that helps!

Carol says

Simple and delicious. Loved it!!!

Yay! We’re so glad to hear it, Carol. Thank you for the lovely review! xo

Ann says

This recipe was delicious! The sauce was so flavorful and easy to make. I used ingredients I had on hand and used fresh lemon juice instead of lime, but it was heavenly. I love how the citrus flavor comes through, making it so fresh. I will be making this again soon.

We’re so glad you enjoyed it, Ann! Thanks so much for the lovely review. xo

Miranda says

Never tasted a mushroom so good!! Yes, the sauce was amazing. Thanks for all you do!

Thank you so much for your kind words and lovely review, Miranda! xoxo

Mark S. says

First time ever making a plant-based entree. I followed the directions exactly, using the green onions, and adding the toasted sesame seeds for garnish. It was so delicious – my wife and I just love the dish! Looking forward to making your other recipes. Thank you for posting!

We’re so glad you and your wife enjoyed the recipe, Mark. Thank you for sharing your experience!

Natalya says

I never leave comments on cooking blog posts, but the sauce left me SPEECHLESS! Thank you!!!

Whoop! We’re so glad you loved it, Natalya. Thank you for taking the time to leave a review! xo